Anthropic officially launched its newest artificial intelligence model, Claude Opus 4.7, on Thursday, April 16, 2026.

Key Takeaways:

- Anthropic launched Claude Opus 4.7 on April 16, 2026, featuring an 87.6% score on the SWE-bench Verified test.

- The AI industry's shift toward agentic autonomy sees Opus 4.7 outperform GPT-5.4 in complex coding and finance.

- Developers must manage costs, as the new model uses 1.0 to 1.35 times more tokens than the previous 4.6 version.

AI Evolution: Claude Opus 4.7 Released With Enhanced Vision and Memory

The San Francisco-based AI startup positioned the release as its most capable generally available model to date. It serves as a targeted upgrade over the Opus 4.6 version that arrived just two months ago in February.

While the restricted Claude Mythos Preview remains in limited testing for cybersecurity, Opus 4.7 is built for the broader market. It focuses specifically on software engineering, long-horizon tasks, and complex financial analysis.

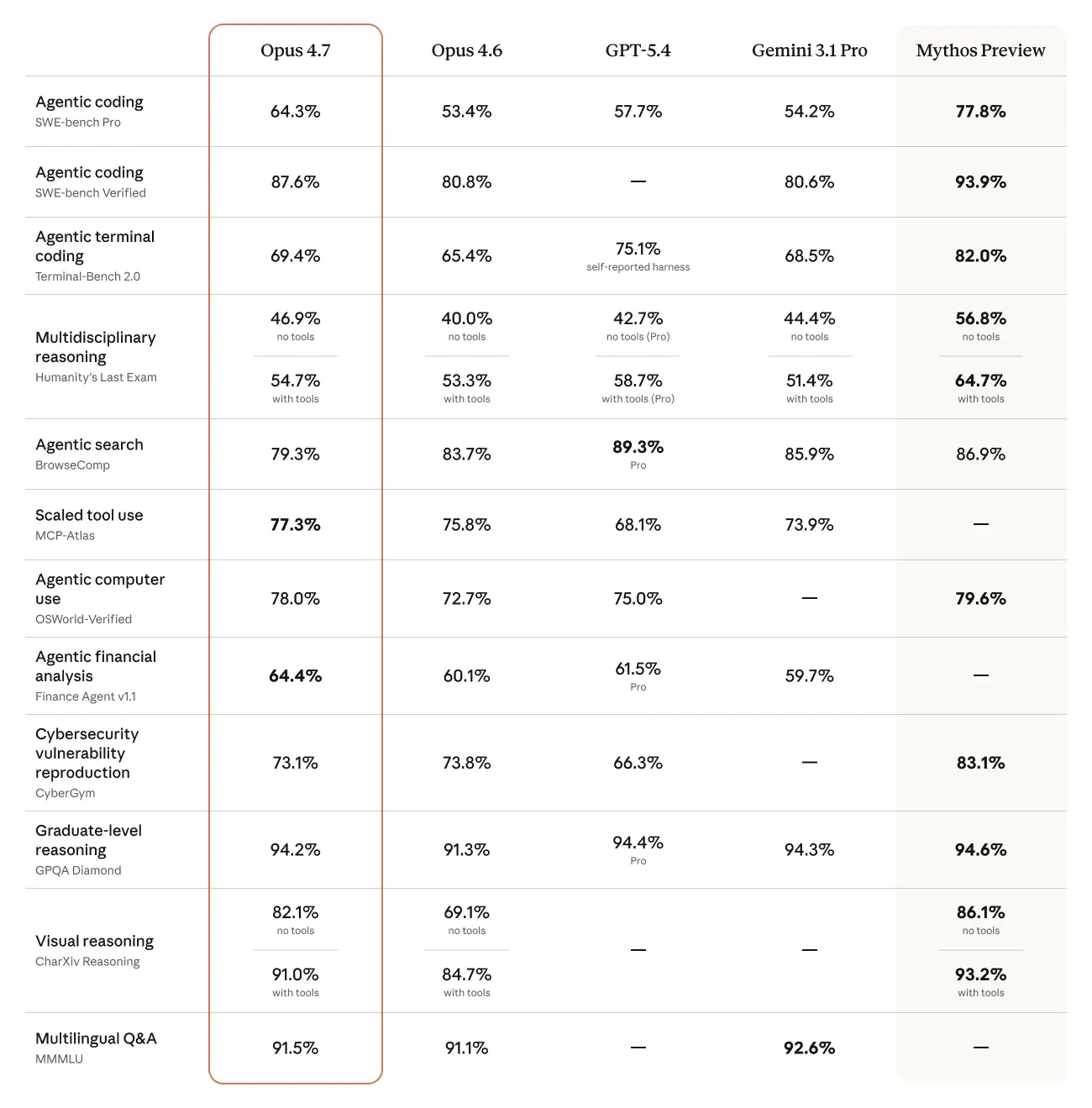

Performance metrics released by Anthropic show the model gaining significant ground in autonomous workflows. On the SWE-bench Verified coding benchmark, the new model hit 87.6 percent, up from the 80.8 percent seen in the 4.6 release.

Anthropic benchmarks.

The model also managed to edge out its primary competition in several key categories. Anthropic reported that Opus 4.7 outperformed OpenAI’s GPT-5.4 and Google’s Gemini 3.1 Pro in tool use and computer work tests.

One of the most visible changes involves a massive upgrade to the model’s vision capabilities. Claude Opus 4.7 can now process images up to 2,576 pixels on the long edge, which is triple the previous resolution limit.

This visual boost allows the AI to better interpret complex charts, user interfaces, and technical diagrams. However, the company noted that higher-resolution images consume more tokens, potentially increasing costs for high-volume users.
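Because larger images cost more tokens, some teams may prefer to downscale screenshots and charts on the client before sending them. The sketch below only computes target dimensions that keep the longer side within the 2,576-pixel figure cited above. The function name `fit_long_edge` is hypothetical, and the article does not say how the model itself treats images that exceed the limit.

```python
def fit_long_edge(width: int, height: int, max_long_edge: int = 2576) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side is at most max_long_edge.

    Assumption: 2576 is the long-edge limit reported in the article; this helper
    is an illustrative client-side calculation, not part of any official SDK.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")

    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        # Already within the limit; never upscale.
        return width, height

    # Apply one scale factor to both sides to preserve the aspect ratio.
    scale = max_long_edge / long_edge
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    print(fit_long_edge(5152, 2576))  # landscape image, twice the limit
    print(fit_long_edge(1000, 500))   # small image, returned unchanged
```

The resulting dimensions can be passed to whatever image library a project already uses for the actual resize.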

Anthropic also introduced a new feature called /ultrareview within its Claude Code environment. This tool allows professional and Max-tier users to run multi-agent sessions to identify bugs and design flaws in software.

For financial professionals, the model shows a higher degree of rigor in economic modeling. It achieved a 0.813 score on the General Finance module, representing a meaningful step up from the previous version’s 0.767 rating.

The pricing structure for the model remains unchanged at $5 per million input tokens and $25 per million output tokens. To help manage expenses during long autonomous runs, Anthropic added a task budget feature in public beta.
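To make those rates concrete, here is a minimal sketch that turns token counts into a dollar estimate. Only the $5 and $25 per-million figures come from the article; the function name and the sample token counts are hypothetical, and real bills may differ depending on features the article does not cover.

```python
# Rates quoted in the article, in US dollars per one million tokens.
INPUT_USD_PER_MILLION = 5.0
OUTPUT_USD_PER_MILLION = 25.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one run from its input and output token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens * INPUT_USD_PER_MILLION / 1_000_000
    output_cost = output_tokens * OUTPUT_USD_PER_MILLION / 1_000_000
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical agent run: 200,000 tokens read, 40,000 tokens written.
    print(f"${estimate_cost_usd(200_000, 40_000):.2f}")  # prints $2.00
```

Output tokens cost five times as much as input tokens at these rates, so long generated responses tend to dominate the estimate.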

Instructions to a T

Early feedback from the developer community suggests the model is more literal in following instructions. This change might require users to re-tune existing prompts that were optimized for older versions of the Claude family.

“Claude 4.7 is out, and using it feels like stepping into an F1 car. Far more power, and it does exactly what you tell it at full speed. Your job is to pick the direction and make the turns,” one user wrote on X.

Some testers have observed that the updated tokenizer can use up to 1.35 times more tokens for the same input. While this can lead to faster limit depletion, the company argues that the performance per task justifies the usage.
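A quick way to see what that multiplier means in practice is to project a known token count across the reported 1.0x to 1.35x range and check how many requests still fit in a fixed allowance. The sketch below does only that arithmetic. The function names, the 10,000-token request, and the 1,000,000-token allowance are hypothetical, and the actual multiplier for a given prompt will depend on its content.

```python
def projected_token_range(baseline_tokens: int, low: float = 1.0, high: float = 1.35) -> tuple[int, int]:
    """Project a token count measured on the previous version across the reported range."""
    if baseline_tokens < 0:
        raise ValueError("baseline_tokens must be non-negative")
    return round(baseline_tokens * low), round(baseline_tokens * high)


def requests_within_limit(token_limit: int, tokens_per_request: int) -> int:
    """Count how many whole requests of a given size fit in a token allowance."""
    if tokens_per_request <= 0:
        raise ValueError("tokens_per_request must be positive")
    return token_limit // tokens_per_request


if __name__ == "__main__":
    best, worst = projected_token_range(10_000)
    print(best, worst)                              # 10000 13500
    print(requests_within_limit(1_000_000, best))   # 100
    print(requests_within_limit(1_000_000, worst))  # 74
```

In the worst case of this example, the same allowance covers about a quarter fewer requests, which is the "faster limit depletion" testers describe.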

Safety remains a core focus, as the model includes new automated safeguards to block high-risk cybersecurity uses. Anthropic’s system card highlights improved honesty and a stronger resistance to generating harmful content.

The model is now available through the Claude API, Amazon Bedrock, Google Vertex AI, and Microsoft Foundry. It retains the 1 million token context window introduced earlier this year.
